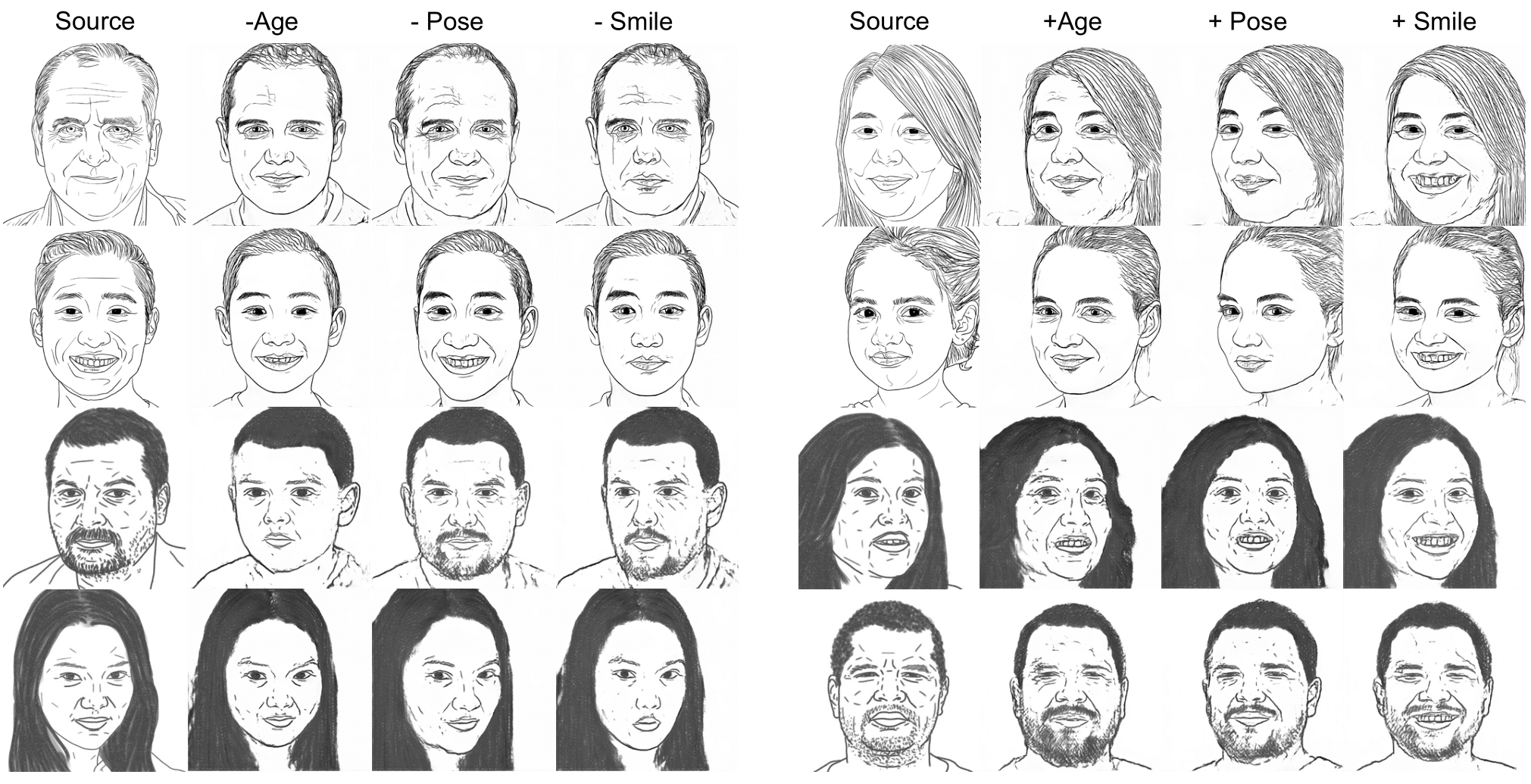

Visualization of StyleSketch results

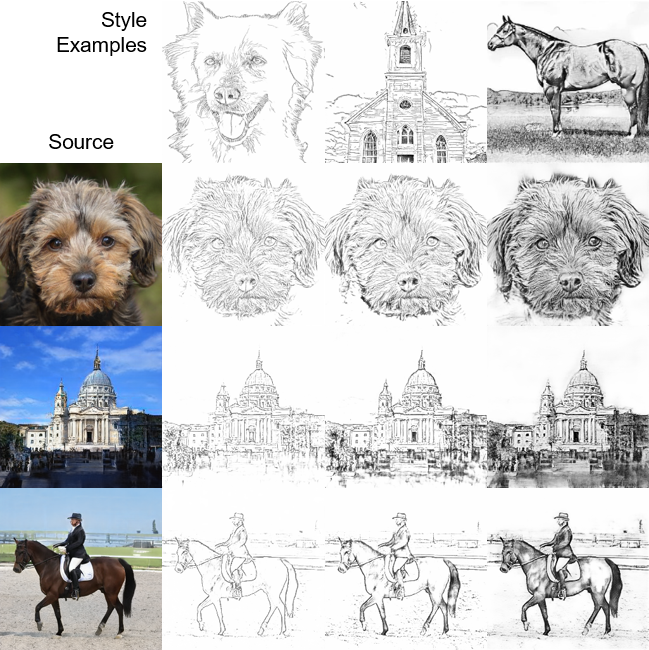

Facial sketches are both a concise way of showing the identity of a person and a means to express artistic intention. While a few techniques have recently emerged that allow sketches to be extracted in different styles, they typically rely on a large amount of data that is difficult to obtain. Here, we propose StyleSketch, a method for extracting high-resolution stylized sketches from a face image. Using the rich semantics of the deep features from a pretrained StyleGAN, we are able to train a sketch generator with only 16 pairs of face images and their corresponding sketches. The sketch generator uses part-based losses with two-stage learning, which gives fast convergence during training and high-quality sketch extraction. Through a set of comparisons, we show that StyleSketch outperforms existing state-of-the-art sketch extraction methods and few-shot image adaptation methods on the task of extracting high-resolution abstract face sketches. We further demonstrate the versatility of StyleSketch by extending its use to other domains and exploring the possibility of semantic editing.

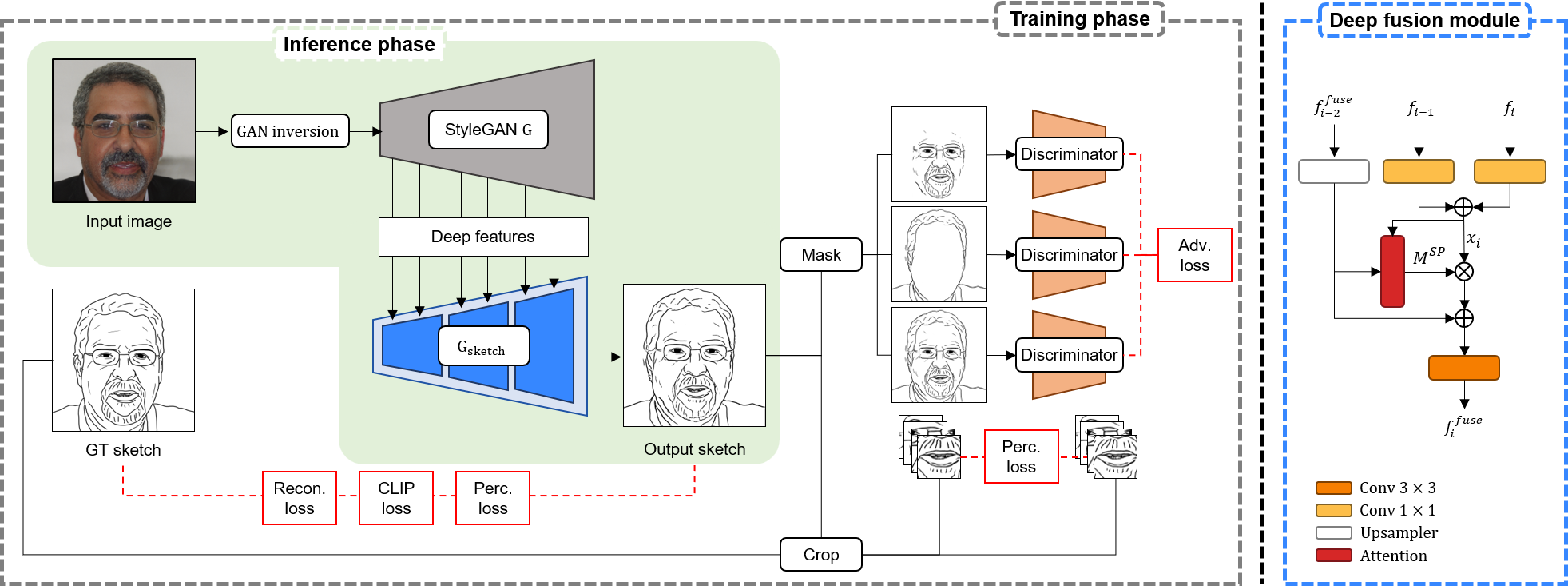

Overview of StyleSketch. To extract a sketch from a face image, we first train a sketch generator that accepts deep features as input. The sketch generator is constructed with the deep fusion module shown on the right side of the figure. After training, we extract sketches by performing GAN inversion of the input image and then feeding the resulting deep features into the sketch generator.
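The data flow in the overview (invert the image, collect deep features, pass them to the sketch generator) can be illustrated with a minimal, dependency-free sketch. Every function here (`invert`, `deep_features`, `upsample`, `sketch_generator`, `extract_sketch`) is a hypothetical stand-in written for illustration. None of them is the StyleSketch implementation, and the averaging used for fusion is only a placeholder for the learned deep fusion module.

```python
from typing import List

Grid = List[List[float]]  # single-channel 2D map with values in [0, 1]


def invert(image: Grid) -> List[float]:
    """Hypothetical stand-in for GAN inversion: image -> latent code.

    The "latent" here is just the per-row mean intensity. A real inversion
    would recover a latent code for a pretrained StyleGAN.
    """
    return [sum(row) / len(row) for row in image]


def deep_features(latent: List[float], resolutions=(2, 4, 8)) -> List[Grid]:
    """Hypothetical stand-in for the generator's intermediate feature maps.

    Returns one square map per resolution, ordered coarse to fine.
    """
    maps = []
    for res in resolutions:
        maps.append([[latent[(r * len(latent)) // res] for _ in range(res)]
                     for r in range(res)])
    return maps


def upsample(grid: Grid, size: int) -> Grid:
    """Nearest-neighbour upsampling of a square map to size x size."""
    n = len(grid)
    return [[grid[(r * n) // size][(c * n) // size] for c in range(size)]
            for r in range(size)]


def sketch_generator(features: List[Grid]) -> Grid:
    """Toy fusion: upsample all maps to the finest resolution and average.

    Assumption: this only mimics the shape of the computation. The actual
    fusion module is trained, not a fixed average.
    """
    size = len(features[-1])
    up = [upsample(f, size) for f in features]
    return [[sum(u[r][c] for u in up) / len(up) for c in range(size)]
            for r in range(size)]


def extract_sketch(image: Grid) -> Grid:
    """Inference path: inversion, then deep features, then sketch generator."""
    latent = invert(image)
    return sketch_generator(deep_features(latent))


if __name__ == "__main__":
    face = [[0.5] * 8 for _ in range(8)]  # toy 8x8 "face image"
    sketch = extract_sketch(face)
    print(len(sketch), len(sketch[0]))
```

The point of the example is the ordering of the stages: the sketch generator never sees pixels directly, only the multi-resolution features obtained after inversion.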

@inproceedings{yun2024stylized,
  title={Stylized Face Sketch Extraction via Generative Prior with Limited Data},
  author={Yun, Kwan and Seo, Kwanggyoon and Seo, Changwook and Yoon, Soyeon and Kim, Seongcheol and Ji, Soohyun and Ashtari, Amirsaman and Noh, Junyong},
  booktitle={Computer Graphics Forum},
  year={2024},
  organization={Wiley Online Library}
}